Revision as of 11:23, 29 April 2021

Webapps with python

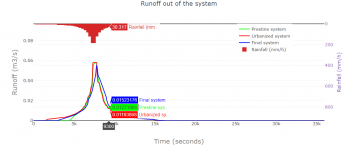

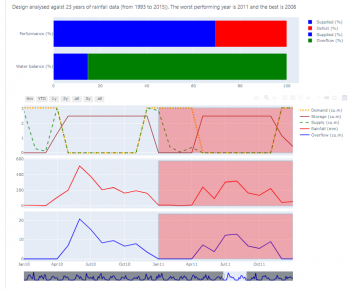

For a long time, the tradition in providing technical solutions was for experts to produce the designs and implement them for the benefit of society. At a later stage, we started practising ‘awareness raising’. While this was a step in the right direction, attempting to describe the whys, whats and hows of a technical solution to societal stakeholders, awareness raising often worked as an afterthought. A large body of evidence has shown that the best outcomes are achieved by involving the community from day one of a technical solution, that is, from the planning and design stage. Whether it is money management in a family, a piece of policy in a government or an institution, or any type of technical solution, the best stakeholder support is obtained when people co-own the product. The journey to co-ownership starts with co-discovery (of knowledge), followed by co-design and implementing together.

This applies to any type of technical solution. However, it becomes a non-negotiable requirement for success in climate and nature-based solutions, because both the problem and its solutions are inherently distributed. Delivery of electricity to a customer from a thermal power plant also involves dealing with the ‘users’; we call this customer management. But when we go for household-level, grid-connected solar electricity generation, that customer must become a business partner! That is the transformation we are witnessing in many sectors addressing problems with climate and nature-based solutions. This is how people are empowered to do designs. The pandemic is a portal to make the wrongs right, and to build back better and greener.

One of the surefire ways of creating co-ownership is to encourage co-discovery and co-design. For this, we face the challenge of bringing modern technological knowledge to the stakeholders, including communities, in a way that is understandable yet still allows them to interact and contribute meaningfully. Interactive web applications are one of the modern tools that contribute to this mission. They allow water managers and scientists to bring complex data analysis solutions, big-data technologies and dynamic water models closer to non-specialist stakeholders in an appealing and simple-to-interact fashion.

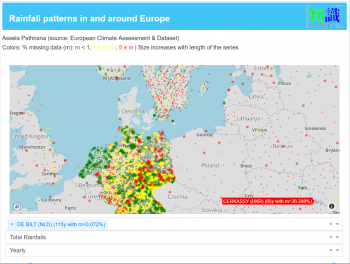

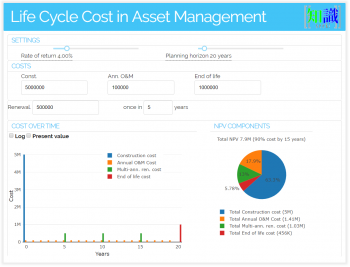

Demonstrations

Here are some prototype web applications that were created for the water management, agriculture and asset management sectors. They are written in Python, using libraries like Plotly Dash and web2py for the frontend. I host these apps in Docker containers on Dokku, a PaaS (Platform as a Service).

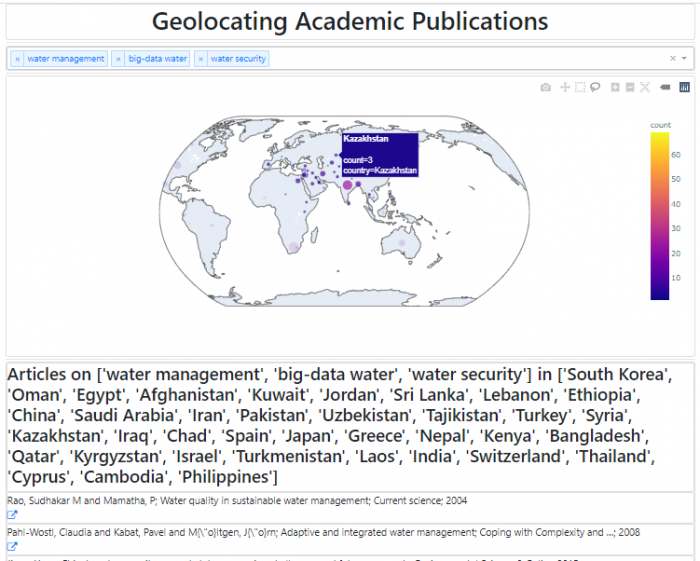

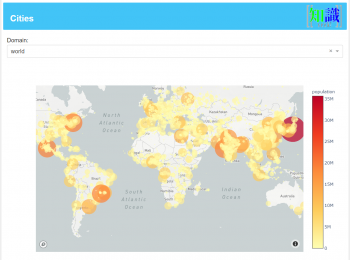

Geohacking publications with Python Natural Language Processing and Friends

What this is and how it works

This app uses Natural Language Processing to geoparse Google Scholar search results. Select a keyword (or more) and see which places the publications refer to. Click on a country's bubble to see its publications listed below the map.

Background

Python provides all the tools needed to do Natural Language Processing, including

- Web scraping e.g. [BeautifulSoup](https://pypi.org/project/beautifulsoup4/)

- Parsing and identifying entities e.g. [NLTK toolkit](https://www.nltk.org/)

- Flagging geographical locations mentioned in the text and geolocating them (geoparsing), e.g. [Mordecai](https://github.com/openeventdata/mordecai), [geograpy3](https://pypi.org/project/geograpy3/) and the simpler [geotext](https://pypi.org/project/geotext/).

What it does

- Downloads Google Scholar search hits for each keyword (in this demo, I have limited each keyword to the top 500 hits, to keep things simple).

- Stores them in a NoSQL database (MongoDB).

- Runs a geoparser (geotext in this case) to locate mentions of countries in the title or the abstract.

- Feeds the data to this app, so that the user can explore them interactively.
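The tagging and counting steps above can be sketched roughly as follows. This is a minimal illustration, not the app's actual code: the record fields (`title`, `abstract`), the function names and the tiny `COUNTRIES` list are assumptions, and a plain regular expression stands in for geotext so that the sketch runs with the standard library only.

```python
import re
from collections import Counter

# Hypothetical mini-gazetteer; the demo itself relies on geotext for this step.
COUNTRIES = ("Netherlands", "India", "Kenya", "Brazil")
_PATTERN = re.compile(r"\b(" + "|".join(map(re.escape, COUNTRIES)) + r")\b")


def tag_countries(record):
    """Return the sorted, de-duplicated country names found in title or abstract."""
    text = f"{record.get('title', '')} {record.get('abstract', '')}"
    return sorted(set(_PATTERN.findall(text)))


def country_counts(records):
    """Count publications per country (each record counts once per country)."""
    counts = Counter()
    for record in records:
        counts.update(tag_countries(record))
    return counts
```

The per-country counts are the kind of number that could drive the bubble sizes on the map.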

How to use

- (After closing these instructions) Select a keyword. The locations of the publications will be shown on the map. A list of all the publications will be shown below the map.

- Click on the bubbles on the map to filter by country. Then the list will be updated to cover only that country.

- It is possible to select more than one keyword (simply select from the dropdown list)

- It's also possible to select several countries. Either SHIFT+Click on the map or use the select tools (top-right).

- Click on the link below each record to see it on Google Scholar.
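The selection behaviour described above (one or more keywords, optionally narrowed to one or more countries) boils down to a simple filter. The sketch below assumes each record carries a `keyword` string and a `countries` list; both field names and the function name are hypothetical, and the wiring to the map and dropdown is left out.

```python
def filter_records(records, keywords, countries=None):
    """Keep records matching any selected keyword and, if given, any selected country."""
    selected_keywords = set(keywords)
    selected_countries = set(countries or [])
    result = []
    for record in records:
        if record["keyword"] not in selected_keywords:
            continue
        # With no country selected, every record for the keyword is listed.
        if selected_countries and not selected_countries & set(record["countries"]):
            continue
        result.append(record)
    return result
```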

What is missing

- Many publications concerning the United States of America do not mention the country name (e.g. A statewide assessment of mercury dynamics in North Carolina water bodies and fish).

NLP tools are usually smart enough to detect these (North Carolina is in the USA, so tag as 'USA'), but the current (demo) implementation misses some obscure names.

- Tagging 'The United Kingdom' vs. 'England' is complicated and still has to be fixed (that is why no articles are tagged for England).
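One possible way to narrow both gaps is an explicit alias table that maps subnational names to a country. The sketch below is only an illustration: the `ALIASES` entries and the function name are assumptions, and folding England into the United Kingdom is one policy choice among several, not how the demo currently behaves.

```python
import re

# Hypothetical alias table: subnational name -> country to tag.
ALIASES = {
    "North Carolina": "United States",
    "California": "United States",
    "England": "United Kingdom",
    "Scotland": "United Kingdom",
}
# Longer names first, so multi-word aliases win over any shorter overlap.
_ALIAS_PATTERN = re.compile(
    r"\b("
    + "|".join(re.escape(name) for name in sorted(ALIASES, key=len, reverse=True))
    + r")\b"
)


def countries_from_aliases(text):
    """Return the set of countries implied by subnational names in the text."""
    return {ALIASES[match] for match in _ALIAS_PATTERN.findall(text)}
```

The result could be merged with the countries found by the geoparser before the records are stored.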

Next step?

This demo provides a framework for Natural Language Processing of online material to make sense of information (e.g. geoparsing). It combines several big-data constructs (unstructured data, NoSQL (JSON) data lakes, NLP tricks). While a web app is not the right place to scale these up to the big-data level, the framework presented here can easily be adapted for large-scale processing on a decent cluster computer system.

With large-scale applications, some of the possibilities are:

- Identify temporal trends in publications.

- Locate 'hotspots' as well as locations with few (or no) studies (geographical gaps)
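Both possibilities reduce to counting over the tagged records. As a rough sketch, assuming each record has a `year` and a `countries` list (hypothetical field names, as are the function names):

```python
from collections import Counter


def publications_per_year(records):
    """Return {year: number of publications}, ordered by year."""
    counts = Counter(r["year"] for r in records if r.get("year") is not None)
    return dict(sorted(counts.items()))


def geographical_gaps(records, candidate_countries, max_studies=0):
    """Return candidate countries with at most `max_studies` tagged publications."""
    counts = Counter(c for r in records for c in r.get("countries", []))
    return sorted(c for c in candidate_countries if counts[c] <= max_studies)
```

Hotspots can be read from the same per-country counts by looking at the largest values instead of the smallest.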

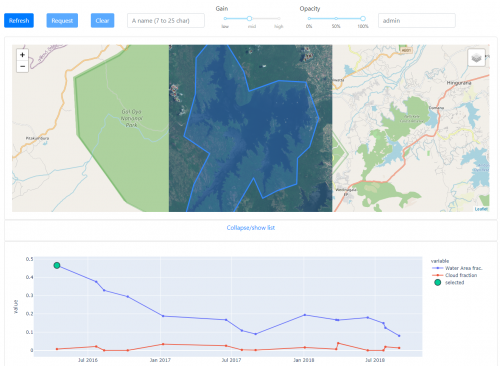

Seasonal levels in waterbodies using Sentinel-2 data

End-to-end automated system for calculating seasonal water availability in practically any waterbody.